GraphRAG: The Evolution of RAG in AI Knowledge Management Systems

The integration of advanced techniques for knowledge management has become paramount in the rapidly evolving path of artificial intelligence (AI). One such innovation is GraphRAG, which enhances traditional Retrieval-augmented generation (RAG) systems by incorporating knowledge graphs and ontologies.

Knowledge graphs are structured networks of interconnected entities and facts, while ontologies provide the formal framework of rules and categories that define how those concepts relate to one another. In this guide, we delve into the intricacies of GraphRAG, exploring its significance, functionality, and transformative potential in knowledge management.

What Is GraphRAG?

GraphRAG is an advanced retrieval framework that marries the reasoning capabilities of large language models (LLMs) with the structured precision of knowledge graphs. Unlike standard retrieval systems that search for isolated text fragments based on keyword or semantic similarity, this method maps out data as a network of interconnected entities and relationships.

The graph-based structure allows the AI to navigate complex, multi-hop connections and generate comprehensive summaries of massive datasets that traditional methods might overlook. The system essentially builds a conceptual map of information, enabling it to provide more accurate, context-aware answers that reflect the true depth of the underlying knowledge base.

What Is The Role of Knowledge Graphs in GraphRAG

In GraphRAG, knowledge graphs serve as the structural backbone that connects entities and their relationships, giving the AI a map to navigate rather than a pile of documents to search through. Rather than scanning isolated text for keywords or semantic similarity, the system follows logical paths between concepts, the same way a subject-matter expert would reason through a problem.

A knowledge graph represents this structure as a network of real-world knowledge, with nodes representing entities and edges representing their relationships. This structure allows for:

- Flexible data representation: Knowledge graphs can easily adapt to new information, accommodating changes without disrupting the entire system.

- Semantic understanding: Each relationship in a knowledge graph carries meaning, enabling the AI to traverse the graph and retrieve relevant information based on context.

- Multi-hop queries: Knowledge graphs support complex queries that traverse multiple relationships, enabling AI to gather comprehensive insights.

Leveraging these structural advantages allows GraphRAG to transcend the limitations of simple keyword matching by identifying deep-seated patterns within a dataset. Its interconnected framework provides the context needed for AI models to generate accurate, nuanced responses.

How Did GraphRAG Evolve from RAG?

The transition from RAG to GraphRAG in AI Knowledge Management represents a strategic shift from simple document retrieval to a structured, conceptual understanding of an organization’s internal intelligence.

Retrieval-augmented generation (RAG) is a technique that combines LLM mechanisms with data sources to generate accurate, contextually relevant responses. It is a cornerstone of modern AI implementations in external generative AI tools, internal knowledge management systems, conversational AI tools, and intelligent search.

Traditional RAG, however, relies on vector similarity to identify relevant text chunks, but this often fails to capture intricate relationships or global themes across the entire dataset. Benchmarks by FalkorDB in 2025 showed that while Vector RAG scored nearly 0% accuracy on schema-bound queries, optimized GraphRAG implementations achieved over 90% accuracy.

To bridge the gap, GraphRAG integrates knowledge graphs that map out data as a network of nodes and edges, allowing the system to follow logical paths between disparate pieces of information. It enables the model to perform multi-hop reasoning and provide high-level summaries, transforming a collection of flat files into a sophisticated, navigable brain.

The RAG process and its limitations

The RAG process typically involves several key steps to transform static data into actionable insights. It relies on mathematical representations of text to bridge the gap between human language and machine processing. Engineers follow a structured path to ensure the model has access to the most relevant information before generating an output.

How RAG works

-

Indexing knowledge The system breaks down a collection of documents into smaller text chunks, creating vector embeddings that represent the meaning of each piece of text. These embeddings are stored in a vector database for efficient retrieval.

-

Query embedding When a user poses a question, the system converts the query into a vector embedding using the same method applied during indexing.

-

Similarity search The system compares the query vector against stored vectors to identify the most relevant text chunks.

-

Response generation The language model combines the user’s question with the retrieved snippets to generate a coherent answer.

Despite its advantages, traditional RAG systems face several challenges. These limitations highlight the need for a more sophisticated approach to knowledge management:

- Disconnected information: RAG treats retrieved documents as isolated text passages, making it difficult for the model to synthesize information across multiple sources.

- Lack of contextual understanding: RAG primarily relies on semantic similarity for retrieval, which does not inherently capture the relationships between different pieces of information.

- Inconsistent responses: The absence of a structured reasoning framework can lead to inconsistencies in the generated answers, particularly for complex queries that require deeper analysis.

GraphRAG prevents the loss of context that occurs when documents are sliced into isolated, disconnected fragments. The resulting output provides a comprehensive narrative rather than a disjointed collection of facts. IBM reports indicate that GraphRAG can achieve higher comprehensiveness (72–83%) while needing up to 97% fewer tokens for root-level summaries compared to traditional RAG.

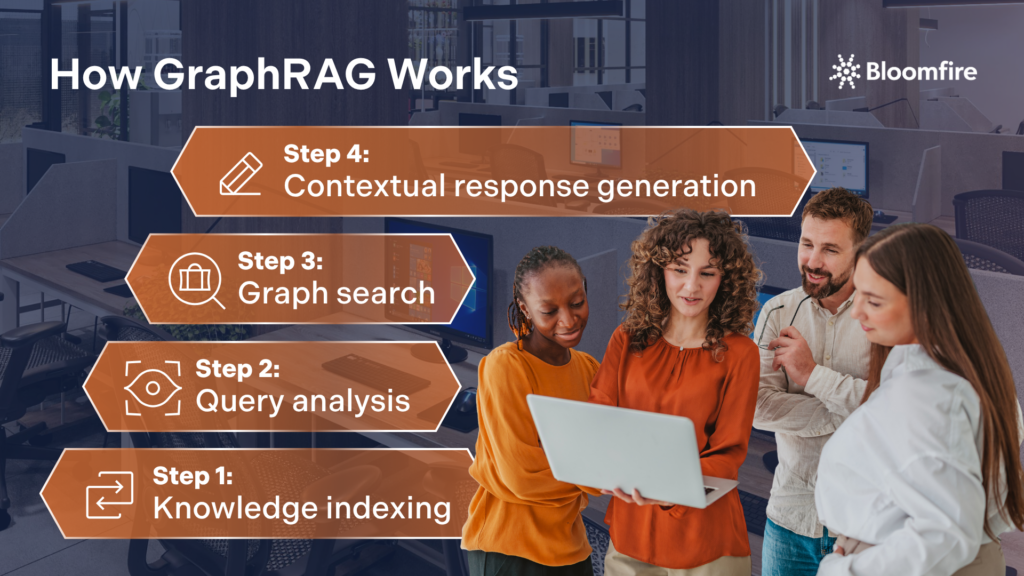

How GraphRAG Works

The GraphRAG process builds upon the traditional RAG framework by incorporating knowledge graphs at various stages of information retrieval and response generation. Its architecture allows the system to synthesize insights from multiple internal sources, ensuring that complex organizational queries are answered with a holistic view rather than isolated snippets of data. To do this, GraphRAG follows these steps:

Step 1: Knowledge indexing

In GraphRAG, both structured and unstructured data are indexed. Structured data, such as databases, is transformed into triples, while unstructured data is segmented into manageable text chunks. During this process, entities and relationships are extracted, and embeddings are calculated to create a comprehensive knowledge graph.

Step 2: Query analysis

When a user submits a query, the system analyzes the key terms and entities within the question. This analysis helps identify relevant nodes in the knowledge graph that can provide additional context for the response.

Step 3: Graph search

Instead of relying solely on semantic similarity, GraphRAG queries the knowledge graph for related entities and relationships. This allows the system to retrieve interconnected facts, enhancing the depth and accuracy of the generated response.

Step 4: Contextual response generation

The generative model uses the user’s query, along with enriched context from the knowledge graph, to produce a well-informed answer. This process ensures that the AI can synthesize information from multiple sources, leading to more coherent and insightful responses.

It’s worth noting that GraphRAG doesn’t replace vector search; in most production implementations, the two work together. Vector search handles semantic similarity, surfacing text chunks that are conceptually close to the user’s query. The knowledge graph then adds structural reasoning on top, tracing the relationships between those results to provide deeper context.

This hybrid approach captures both the nuance of language and the logic of connections, giving the AI a richer foundation than either method could provide on its own. Organizations building on Bloomfire’s knowledge infrastructure benefit from this architecture by default: the knowledge base provides the structured, relationship-aware foundation that enables hybrid GraphRAG retrieval.

Implementing these steps ensures that every generated answer is rooted in a factual, interconnected web of logic rather than fragmented data points. Advanced knowledge management strategies further amplify this process, turning static information into a dynamic asset for informed decision-making.

What Are the Advantages of GraphRAG in Knowledge Management?

Leveraging structured relationships alongside traditional retrieval methods allows organizations to unlock deeper insights from their data. This is the foundation of GraphRAG’s advantages over RAG’s.

GraphRAG offers several key benefits that enhance knowledge management practices across various industries.

1. Improved contextual understanding

Integrating knowledge graphs allows GraphRAG to elevate the best knowledge management systems by disambiguating complex context with high precision. For instance, if a user queries “Jaguar” within a corporate database, the graph structure immediately clarifies whether the intent refers to the automotive manufacturer or the biological species. This relational mapping ensures that internal AI tools provide the exact context required for accurate, professional-grade responses.

2. Enhanced reasoning capabilities

GraphRAG allows AI systems to perform multi-hop reasoning, enabling them to connect related facts and derive insights that would be challenging to obtain through traditional RAG methods. This capability is particularly valuable for complex queries that require a deeper understanding of relationships.

3. Consistency and explainability

The structured nature of knowledge graphs ensures that AI-generated responses are consistent and reliable. GraphRAG enhances explainability, allowing users to understand the reasoning behind the AI’s conclusions by tracing the nodes and edges used to derive an answer.

4. Reduced hallucinations

Generative AI tools often hallucinate answers when they lack specific context or when information is siloed across different sections of a database. Since GraphRAG retrieves data based on hard-coded relationships (triples of Subject-Predicate-Object), the ground truth is more structured. This provides a factual skeleton to build its response upon, significantly increasing accuracy.

5. Better handling of data noise

Within a massive knowledge base, many documents might use similar terminology but be irrelevant to a query’s specific intent. To address this chaos, GraphRAG uses the connectivity among nodes to determine relevance. If a node is disconnected from the main topic of your query, the system knows it’s likely irrelevant, even if the keywords match.

These advantages have been tracked in multiple studies. In one research that highlights KG-LLM-Bench as a benchmark for screening LLM reasoning, it showed that GraphRAG achieved over 50% higher accuracy than standard RAG on benchmarks requiring multiple reasoning steps (multi-hop queries).

Strategic implementation ensures that information is not only easily retrievable but also deeply rooted in the business’s unique domain expertise. These combined strengths ultimately provide a competitive edge by fostering a more reliable and transparent AI ecosystem across the entire enterprise.

What Are the Challenges and Considerations of GraphRAG Implementation?

While GraphRAG offers numerous advantages, it also presents challenges in implementation. For instance, the computational overhead and complexity involved in constructing and maintaining a high-fidelity knowledge graph can be significant compared to traditional vector-based retrieval methods. In the real-world setting, this can be translated to the following obstacles:

1. Complexity of knowledge graph construction

Building and maintaining a knowledge graph requires careful planning and expertise. Organizations must invest time and resources into defining ontologies, extracting relevant information or data, and ensuring the accuracy of relationships.

2. Data privacy and compliance

As with any AI system, data privacy and compliance are critical considerations. Organizations must ensure they adhere to regulations on data use and protection, particularly when handling sensitive information.

3. Continuous updates and maintenance

Knowledge graphs must be regularly updated to reflect new information and changes in relationships. Organizations need to establish processes for maintaining the accuracy and relevance of their knowledge graphs over time.

This is exactly the challenge a KM platform like Bloomfire is built to solve. Features like automated knowledge health checks and self-healing knowledge base tools actively monitor and flag content that has gone stale or inconsistent, so the structural integrity of your knowledge graph doesn’t depend on someone remembering to update it. Combined with built-in security and compliance controls, Bloomfire gives knowledge management teams a stable, maintained foundation for GraphRAG, without the manual overhead of upkeep.

What Are the Future Directions for GraphRAG?

The future of GraphRAG holds exciting possibilities as AI and data management continue to advance. Technological breakthroughs in LLMs will likely refine the precision of relationship extraction, transforming how deep contextual connections are mapped across massive datasets. That said, we are looking at the following potential actions to further improve GraphRAG.

Automation of knowledge graph creation

Technologies, such as natural language processing and machine learning, may enable the automated generation of knowledge graphs from unstructured data sources. This could streamline the process of building and maintaining knowledge graphs, making them more accessible to organizations.

Integration with other AI technologies

GraphRAG can be further enhanced by integrating with other AI technologies, such as machine learning algorithms and predictive analytics. This combination could lead to even more sophisticated insights and decision-making capabilities.

Expansion into new domains

As organizations across various industries recognize the value of GraphRAG, its applications are likely to expand into new domains, including healthcare, finance, and education. The ability to connect and synthesize information from diverse sources will be invaluable in these fields.

Enhanced real-time adaptation

Dynamic graph synchronization techniques will likely allow systems to incorporate live data streams in real time, ensuring the retrieval process reflects the most current information available. This evolution minimizes the latency between data ingestion and insight generation, providing a more responsive experience for time-sensitive applications.

Cross-modal knowledge synthesis

Future iterations may bridge the gap between different data formats, linking visual, auditory, and textual information within a single unified graph structure. Such a multidimensional approach enables the AI to understand complex relationships across varied media types, significantly enriching the context provided during the retrieval phase.

Organizations that embrace these evolving graph architectures will gain a decisive competitive advantage through superior knowledge discovery and reasoning. This trajectory suggests a future in which GraphRAG serves as the cognitive backbone of truly intelligent, context-aware enterprise systems.

Not Just a “Nice-to-Have:” GraphRAG in KMS

GraphRAG represents an advancement in knowledge management, combining the strengths of traditional RAG systems with the structured knowledge representation of knowledge graphs. As organizations continue to explore the potential of GraphRAG, its applications are set to transform knowledge management practices across various industries, paving the way for a more informed and connected future. Thus, a KMS without GraphRAG misses in providing an intelligent search that fosters a deeper understanding of the relationships between information.

Reliable Intelligence on Demand

Bridge the gap between data and truth with AI that prioritizes content freshness.

Trust Your Intelligence

Enterprises often deal with siloed information that requires understanding high-level relationships across different documents. This methodology allows systems to answer global questions about a dataset rather than just finding specific text snippets.

Staff receive more contextually aware answers that explain the reasoning and connections behind the data. This transparency builds trust in the internal knowledge base and speeds up decision-making processes.

The graph structure enables the retriever to traverse multi-hop relationships that a simple semantic search might miss. It provides a map of how concepts are linked, ensuring the retrieved context is both relevant and comprehensive.

The LLM is anchored to a factual, structured backbone of verified entities and relationships. Providing this explicit structure limits the model’s tendency to invent connections that do not exist in the source material.

New information can be integrated by adding new nodes and edges to the existing graph structure. This incremental updating ensures the knowledge management system stays current without a full re-indexing of the entire library.

Many modern architectures use a hybrid approach that combines vector embeddings for local similarity and graphs for global structure. Using both methods together captures both the nuance of language and the logic of relationships.

What Is a Knowledge Base Article?

How Knowledge Graphs Work in Enterprise AI

Estimate the Value of Your Knowledge Assets

Use this calculator to see how enterprise intelligence can impact your bottom line. Choose areas of focus, and see tailored calculations that will give you a tangible ROI.

Take a self guided Tour

See Bloomfire in action across several potential configurations. Imagine the potential of your team when they stop searching and start finding critical knowledge.